EXPERIMENT OVERVIEW

The TERIYAKI experiment takes place in a manufacturing SME that screen-prints membrane switches, where task coordination, quality assurance and production tracking are currently manual and experience-driven. This leads to inefficiencies, variability in quality control, and limited use of production data for performance monitoring and improvement.

The goal of the experiment is to design, implement and validate a modular Digital Intelligent Assistant (DIA) for manufacturing environments, addressing the above issues. The system will integrate voice-enabled interaction, intelligent task coordination, computer vision-based quality assurance and structured performance data collection into a unified solution. The experiment will demonstrate how such an assistant can be deployed in a real SME production setting using open, interoperable technologies and a containerised architecture.

TERIYAKI DIA components & architecture

The TERIYAKI DIA will be composed of four interconnected functional components designed to address the current inefficiencies and limited data visibility within the production environment.

First, a voice interaction component will be the main DIA interface, enabling workers to communicate with the system using natural speech and reducing reliance on manual reporting and tracking. This component will handle speech recognition, intent detection and spoken feedback, supporting hands-free and structured task logging. Second, a computer vision-based quality assurance module will capture product images during manufacturing and run a tailored computer vision model to detect quality defects in near real time. This capability aims to reduce inspection variability and strengthen consistency in quality control while maintaining human oversight. Third, a task coordination module will manage production orders by organising, sequencing and assigning tasks based on predefined workflows and available resources, supporting more structured coordination across concurrent activities. Finally, an analytics and visualisation component will collect structured production data such as task durations, defect rates and workload distribution, and present them through an intuitive dashboard to enable improved performance monitoring and data-driven decision-making over time.

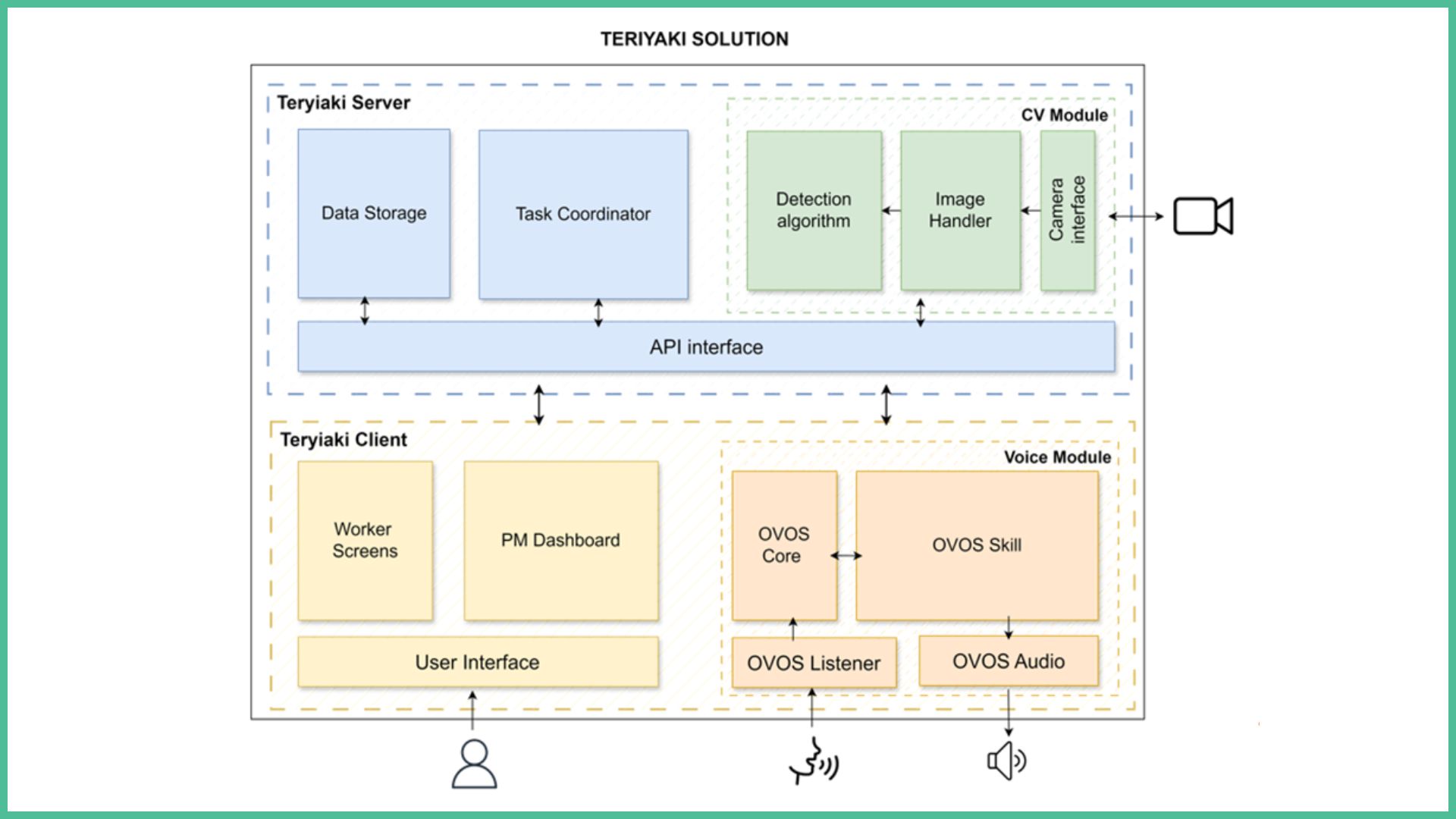

Architecturally, the system will be divided into a client layer and a server layer (see Figure 1). The client layer will host the user interfaces and voice services, while the server layer will contain the task coordination logic, data storage and computer vision processing pipeline. Communication between layers will be handled through an application programming interface (API), enabling modular upgrades and potential replication in other manufacturing environments.
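As an illustration of the client/server split, the sketch below shows how a client-side voice service might package a recognised command as a JSON message for the server API. The `TaskEvent` type and its field names (`actor_id`, `intent`, `payload`, `timestamp`) are hypothetical; the experiment description does not specify the API schema.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class TaskEvent:
    """Hypothetical message sent from the client layer to the server API."""
    actor_id: str   # e.g. "ACT2" (assumed identifier scheme)
    intent: str     # e.g. "start_task" (assumed intent name)
    payload: dict = field(default_factory=dict)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Client side: serialise the event as a JSON request body.
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, raw: str) -> "TaskEvent":
        # Server side: rebuild the event from the JSON request body.
        return cls(**json.loads(raw))


event = TaskEvent(actor_id="ACT2", intent="start_task", payload={"task": "X"})
restored = TaskEvent.from_json(event.to_json())
```

Keeping the message format independent of either layer is one way to support the modular upgrades mentioned above, since a client or server module could be replaced as long as it produces and accepts the same messages.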

TERIYAKI Operational Scope and Use Case Definition

The following analysis defines the functional boundaries and operational scenarios that will drive the design, development and validation of the TERIYAKI DIA. This structured definition establishes the core system capabilities to be implemented, clarifies the roles and responsibilities of the actors interacting with the system, and defines the concrete operational scenarios that will be validated throughout development and testing.

The tables below therefore act as a formal bridge between system architecture, implementation planning and experimental validation activities.

Table 1. TERIYAKI core system functionalities

| ID | Core System Functionality | Description |
| --- | --- | --- |
| F1 | Voice-Enabled Human–System Interaction | Wake-word activation, speech-to-text, intent recognition and real-time verbal feedback between workers and the DIA. |
| F2 | Automatic CV-Backed Quality Assurance | HD image capture, template alignment, defect detection, heatmap generation, quality scoring, and escalation of borderline cases. |
| F3 | Intelligent Task Coordination, Optimization & Control | Assisted task scheduling considering worker availability, order priorities and resource constraints, with PM review and control. |
| F4 | Production Performance Analytics & Visualization | Performance logging (defect rates, task durations and more) and dashboard-based visualization for monitoring and decision support. |

The four functionalities listed in the table above define the technological and operational scope of the experiment. Each subsequent use case references one or more of these functionalities to ensure traceability between requirements, development and testing. Table 2 details the types of actors that interact with the system during the experiment.

Table 2. TERIYAKI system actors

| ID | Actor Name | Description | DIA Interactions |
| --- | --- | --- | --- |
| ACT1 | Screen Printing Specialist (SPS) | Operator responsible for executing printing tasks on membrane switches. | Start/end printing tasks via voice; listen to real-time defect alerts during printing; continue or adjust printing based on system feedback. |
| ACT2 | Hybrid Professional (HP) | Operator responsible for non-printing production tasks and final QA operations. | Start/end assigned tasks via voice; execute final QA tasks; receive QA feedback; escalate issues if required. |
| ACT3 | Production Manager (PM) | Supervisor responsible for order creation, oversight and decision-making. | Create production orders via UI; review scheduling proposals; monitor production performance via PM dashboard; review borderline QA cases. |

Finally, the use cases in Table 3 form the basis of the primary interaction scenarios that will be executed and validated during the experiment. They demonstrate how voice interaction, automated quality inspection, task coordination and performance logging operate together within real production workflows.

Table 3. The core TERIYAKI use cases

| Use Case ID | Title | Description | Actor | Involved Functionalities |
| --- | --- | --- | --- | --- |
| UC1 | Voice-Based Task Execution & Logging | An HP issues a voice command (e.g., “TERIYAKI start task X” / “TERIYAKI finish task X”) to start or complete a production task; the DIA registers the task and logs its execution. | HP | F1, F4 |
| UC2 | In-Process QA Automation | An SPS issues a voice command (e.g., “TERIYAKI start printing product 579 red”); the DIA activates the in-process QA inspection pipeline, which issues alerts when a defect is found. | SPS | F1, F2, F4 |
| UC3 | Final QA Automation | An HP issues a voice command (e.g., “TERIYAKI start final QA product 579”); the DIA activates the final QA inspection pipeline, which issues alerts when a defect is found. | HP | F1, F2, F4 |
| UC4 | Production Order Setup & Oversight | A PM creates a new production order via the PM Dashboard; the DIA generates the corresponding task plan and provides production-related data visualization. | PM | F3, F4 |
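To make the voice-driven use cases more concrete, the sketch below maps a speech-to-text transcript to an intent and its parameters. It is a minimal illustration, not the experiment's implementation: the command grammar is inferred only from the example phrases in the use cases, and the intent names, slot names and the `parse_command` function are hypothetical.

```python
import re
from typing import Optional

WAKE_WORD = "teriyaki"

# Hypothetical grammar, derived only from the example phrases in the use cases.
PATTERNS = [
    ("start_final_qa",
     re.compile(r"^start final qa product (?P<product>\w+)$")),
    ("start_printing",
     re.compile(r"^start printing product (?P<product>\w+)(?: (?P<colour>\w+))?$")),
    ("start_task", re.compile(r"^start task (?P<task>\w+)$")),
    ("finish_task", re.compile(r"^finish task (?P<task>\w+)$")),
]


def parse_command(transcript: str) -> Optional[dict]:
    """Return None without a wake word, else a dict with 'intent' and 'slots'.

    An unrecognised command after the wake word yields the 'clarify' intent,
    so the assistant can ask the worker to repeat instead of guessing.
    """
    words = transcript.lower().split()
    if not words or words[0] != WAKE_WORD:
        return None  # not addressed to the assistant
    text = " ".join(words[1:])
    for intent, pattern in PATTERNS:
        match = pattern.match(text)
        if match:
            # Drop optional slots that were not spoken (e.g. no colour given).
            slots = {k: v for k, v in match.groupdict().items() if v is not None}
            return {"intent": intent, "slots": slots}
    return {"intent": "clarify", "slots": {}}


result = parse_command("TERIYAKI start printing product 579 red")
```

Here `result` holds the `start_printing` intent with the product and colour as slots. Transcripts are lower-cased before matching, so slot values such as a task name come back in lower case.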

TERIYAKI Implementation, Testing and Evaluation Phases

The functional scope, actors and operational use cases defined above form the basis for the evaluation and validation framework of the experiment. These elements will be further refined into structured user stories that guide development activities and internal verification procedures. In addition, a set of System Integration Tests (SITs) and User Acceptance Tests (UATs) will be defined for the different validation phases.

During the first implementation phase (Months 2–6), the user stories will drive internal testing cycles to ensure traceability between defined functionalities and technical integration. The SITs will be conducted to validate the first fully integrated version of the DIA, which marks project milestone MS2 in Month 6. At this stage, the interaction layer, computer vision module, task coordination logic and analytics component will be deployed together for the first time within the factory environment. Following this milestone, Months 7–10 will focus on stabilising integrations across modules, enhancing the CV algorithm, and improving overall system robustness. During the same period, the Web3-related capabilities, including the NFT module, will be integrated, and the technical preparation for packaging and publication within the WASABI marketplace environment will be carried out.

The core TERIYAKI evaluation will take place during Months 10–12, encompassing the execution of the UATs and the measurement of defined KPIs following the completion of training activities for the involved production personnel. This phase will assess usability, operational compatibility, system stability and overall performance under real production conditions, ensuring that the TERIYAKI DIA operates effectively within everyday workflows. During the same period, WASABI marketplace evaluation activities will be conducted to validate the assistant’s containerised packaging, interoperability compliance and Web3-enabled components prior to formal publication.

Demonstration activities to the WASABI consortium will be coordinated according to the maturity level reached at each point in the timeline. Depending on the phase of the project, demonstrations may range from technical integration walkthroughs and backend processing showcases to application interface demonstrations and live operational scenarios within the production environment.

The experiment addresses several interrelated operational and technical challenges within a small manufacturing environment where production coordination and quality assurance processes are largely experience-driven and manually executed. Production orders are managed through human supervision, while visual inspections depend heavily on skilled personnel. This creates bottlenecks, variability in inspection consistency and limited structured data for performance analysis. The challenge is not only technological but also organisational, as any introduced system must integrate into existing workflows without disrupting productivity.

From an operational perspective, task coordination across multiple concurrent orders requires continuous managerial oversight. In environments with limited staff, this can lead to suboptimal task sequencing and idle periods between production steps. Introducing a digital assistant must therefore balance automation with human control, ensuring that the system supports rather than overrides managerial decision-making.
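One way to picture this balance between automation and human control is a scheduler that only proposes assignments and leaves approval to the Production Manager. The sketch below uses a simple greedy rule (most urgent task first, earliest-free qualified worker); the rule, the `Task` fields, the worker identifiers and the status labels are all assumptions made for illustration, and task dependencies are deliberately ignored.

```python
from dataclasses import dataclass


@dataclass
class Task:
    task_id: str
    role: str          # role required, e.g. "SPS" or "HP"
    duration_min: int
    priority: int      # lower value = more urgent (assumed convention)


def propose_schedule(tasks, workers):
    """Greedy proposal: most urgent task first, earliest-free qualified worker.

    `workers` maps a worker identifier to a role. The result is a list of
    proposed assignments that still require Production Manager approval.
    """
    free_at = {name: 0 for name in workers}  # minutes from shift start
    proposal = []
    for task in sorted(tasks, key=lambda t: t.priority):
        qualified = [w for w, role in workers.items() if role == task.role]
        if not qualified:
            # No suitable worker: leave the decision entirely to the PM.
            proposal.append({"task": task.task_id, "worker": None,
                             "start": None, "status": "needs_pm_decision"})
            continue
        worker = min(qualified, key=lambda w: free_at[w])
        start = free_at[worker]
        free_at[worker] = start + task.duration_min
        proposal.append({"task": task.task_id, "worker": worker,
                         "start": start, "status": "pending_pm_review"})
    return proposal


workers = {"sps_1": "SPS", "hp_1": "HP", "hp_2": "HP"}
tasks = [
    Task("print-579", "SPS", 40, 1),
    Task("assemble-579", "HP", 30, 2),
    Task("qa-571", "HP", 15, 1),
    Task("pack-571", "HP", 10, 3),
]
plan = propose_schedule(tasks, workers)
```

Because every entry carries a review status instead of being executed directly, the assistant supports the manager's decision without overriding it.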

Technically, the reliability of voice interaction in an industrial environment presents a significant challenge. Background noise from printers and machinery, short or ambiguous commands, worker accents and potential multi-device interference may reduce speech recognition accuracy. Ensuring robust wake-word activation, reliable intent detection and effective clarification mechanisms is essential to avoid operational disruption caused by misinterpreted commands.

Similarly, computer vision-based quality assurance introduces its own complexity. Variability in lighting conditions, camera positioning and image alignment can directly affect defect detection performance. The system must ensure stable hardware setup, controlled lighting, calibration procedures and threshold tuning to minimise false positives and false negatives while maintaining practical inspection speed.
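Threshold tuning can be illustrated with a two-threshold triage of a quality score, where the band between the thresholds is escalated for human review. This is a sketch under stated assumptions: the score range of 0 to 1, the threshold values and the function names are hypothetical and would need to be calibrated on labelled production images.

```python
def triage(score: float, reject_below: float = 0.60,
           accept_from: float = 0.85) -> str:
    """Map a quality score in [0, 1] to an inspection outcome.

    The default thresholds are placeholders, not calibrated values.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= accept_from:
        return "pass"
    if score < reject_below:
        return "defect"
    return "escalate"  # borderline case: route to a human reviewer


def error_counts(samples, **thresholds):
    """Count errors on (score, is_defective) pairs for given thresholds.

    False positive: a good item flagged as a defect.
    False negative: a defective item that passed.
    Escalated items are counted separately because a human decides them.
    """
    counts = {"false_positive": 0, "false_negative": 0, "escalated": 0}
    for score, is_defective in samples:
        outcome = triage(score, **thresholds)
        if outcome == "escalate":
            counts["escalated"] += 1
        elif outcome == "defect" and not is_defective:
            counts["false_positive"] += 1
        elif outcome == "pass" and is_defective:
            counts["false_negative"] += 1
    return counts


# Made-up labelled samples, for illustration only.
samples = [(0.95, False), (0.90, True), (0.70, True), (0.50, True), (0.40, False)]
counts = error_counts(samples)
```

Widening the band between the two thresholds trades automated errors for more manual reviews, which is the tuning decision the calibration procedures would need to settle.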

Deployment within the factory environment presents additional constraints. The introduction of tablets, cameras and embedded computing equipment must not interfere with established production flows or workspace ergonomics. Hardware positioning and mounting structures must therefore be carefully designed to prevent operational disturbance.

Finally, organisational adoption and user trust represent critical challenges. Workers and managers must perceive the system as supportive rather than intrusive. Ensuring intuitive interaction, gradual integration and adequate training is essential to achieving sustainable adoption and long-term impact.

Currently, finding the right support tools for these specific environments is difficult for many SMEs. Traditional monitoring systems are often costly, technically complex or difficult to integrate into agile SME environments, while standard training materials and safety instructions tend to be static and provide no contextual or real-time support during work. Furthermore, generic voice assistants often rely on cloud processing, which raises concerns regarding latency, reliability and data privacy.

As a result, there is a lack of accessible, privacy-first technologies that align with operational realities and support worker wellbeing without compromising established processes or data protection expectations.

Objective 1:

Develop and integrate a fully operational Digital Intelligent Assistant that combines voice interaction, task coordination, computer vision-based quality assurance and analytics into a unified system, ensuring functional coherence and technical correctness across all modules.

Objective 2:

Evaluate and validate the Digital Intelligent Assistant within the real production environment of the manufacturing SME, executing pilot scenarios, measuring performance against defined indicators and assessing its operational feasibility and user acceptance.

Objective 3:

Prepare, publish and validate the Digital Intelligent Assistant within the WASABI marketplace ecosystem by packaging the solution according to marketplace requirements, integrating Web3-enabled capabilities and completing the necessary interoperability and compliance evaluations.

The experiment is positioned within the industrial electronics manufacturing sector, specifically focusing on membrane switch production. The primary goal is to integrate and test the TERIYAKI Digital Intelligent Assistant directly within real manufacturing conditions at the SKOUPAS production premises in Greece. The validation will take place under normal operating conditions, including screen printing, assembly and quality control, ensuring that the solution is assessed in an authentic industrial environment rather than a laboratory or simulated setting.

The main users involved in the experiment include the Screen Printing Specialist, responsible for operating the printing process and interacting with the quality assurance system during production; the Production Manager, who oversees workflow coordination, scheduling, and prioritization across multiple production orders; and a Hybrid Professional role combining technical understanding with operational responsibilities, acting as a bridge between production staff and the digital solution deployment. These users will interact with the system through workflow coordination tools, computer-vision-assisted quality inspection, and voice-enabled task support.

Relevant stakeholders include company management evaluating operational efficiency improvements, technical partners responsible for solution development and integration, digital innovation support organizations facilitating the experiment, and potentially end customers interested in improved product consistency and traceability. These stakeholders contribute to defining requirements, validating outcomes, and assessing the broader applicability of the solution within manufacturing environments.

Several operational and technical constraints must be carefully addressed during the experiment. The computer-vision-supported quality assurance system operates in-process during screen printing and therefore requires high sensitivity and stability in image acquisition. Multiple variables may affect consistency and defect detection accuracy, including lighting conditions, camera alignment, surface reflections, vibration, print variability, and environmental factors. Ensuring reliable defect identification under these dynamic production conditions represents a critical technical challenge. In parallel, the voice-enabled interaction must function within a real factory environment characterized by background noise from printers and other machinery. Potential issues such as wake-word false positives or false negatives, worker accents, short command structures, and possible interference between multiple devices must be considered to maintain usability and operator trust. These constraints require robust calibration and careful system integration to ensure that TERIYAKI performs reliably without disrupting production continuity or operator workflow.

EXPECTED IMPACT


The TERIYAKI experiment is expected to generate measurable operational improvements within SKOUPAS’ membrane switch manufacturing environment by enhancing task coordination, computer vision-based quality assurance, and workflow visibility under real production conditions. The primary impact will be demonstrated through improvements in production efficiency, reduction of waste, and enhanced decision-making support for production staff and management.

Operationally, the experiment aims to reduce average order lead time by approximately 18% compared to the current baseline. This reduction will reflect improved task prioritization, reduced waiting time between process steps, and faster and more reliable quality validation cycles. Scrap rate, currently averaging approximately 33% in critical stages, is expected to decrease to 25% through the implementation of computer-vision-assisted quality checks and earlier defect identification during screen printing. Additionally, worker idle time and scheduling time are both targeted for a reduction of approximately 20%, reflecting improved coordination and reduced dependency on centralized manual task allocation.
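The arithmetic behind these targets can be worked through in a few lines. The scrap figures (33% and 25%) and the 18% lead time reduction come from the text; the 10-day lead time baseline is a hypothetical value used only to show the calculation, since no baseline is stated.

```python
def relative_reduction(baseline: float, target: float) -> float:
    """Fractional reduction from baseline to target, e.g. 0.25 for 25%."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - target) / baseline


def apply_reduction(baseline: float, reduction: float) -> float:
    """Value remaining after cutting `baseline` by a fractional `reduction`."""
    return baseline * (1.0 - reduction)


# Scrap rate figures from the text: 33% baseline, 25% target.
scrap_points = 33 - 25                       # drop in percentage points
scrap_relative = relative_reduction(33, 25)  # drop as a fraction of baseline

# Accepted units per 1,000 started, before and after.
accepted_before = 1000 * (100 - 33) // 100
accepted_after = 1000 * (100 - 25) // 100

# Lead time: 18% reduction applied to a HYPOTHETICAL 10-day baseline.
lead_time_target_days = apply_reduction(10.0, 0.18)

print(f"Scrap: -{scrap_points} points, {scrap_relative:.1%} relative")
print(f"Accepted per 1,000 started: {accepted_before} -> {accepted_after}")
print(f"Lead time: 10.0 -> {lead_time_target_days:.1f} days")
```

The scrap target is therefore a drop of 8 percentage points, or roughly 24% relative to the baseline, which corresponds to 80 additional accepted units per 1,000 started.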

For end users within the factory environment (the Screen Printing Specialist, the Production Manager and the Hybrid Professional), the expected benefits include clearer task visibility, more structured workflow guidance, faster identification of print defects, and reduced need for repeated coordination exchanges. The Production Manager is expected to experience reduced cognitive load through improved workflow transparency and structured task support. Operators should benefit from more consistent inspection support and improved responsiveness during high-production periods.

For broader stakeholders, including company management and digital innovation partners, success will be measured through quantifiable improvements in efficiency and consistency, as well as validation of the Digital Intelligent Assistant in a real industrial setting. Demonstrating successful integration of voice interaction and in-process computer vision under real manufacturing constraints will strengthen scalability potential across similar small and medium-sized manufacturing environments.

From a sustainability perspective, scrap reduction directly contributes to lower material waste, reduced energy consumption per accepted product, and decreased rework cycles. Earlier defect detection limits value-added processing on defective units, minimizing unnecessary use of inks, substrates, laminates, and labour. Reduced idle time and improved scheduling efficiency also contribute indirectly to better resource utilization and optimized energy use across production operations.
