SEQGEN-AI

EXPERIMENT OVERVIEW

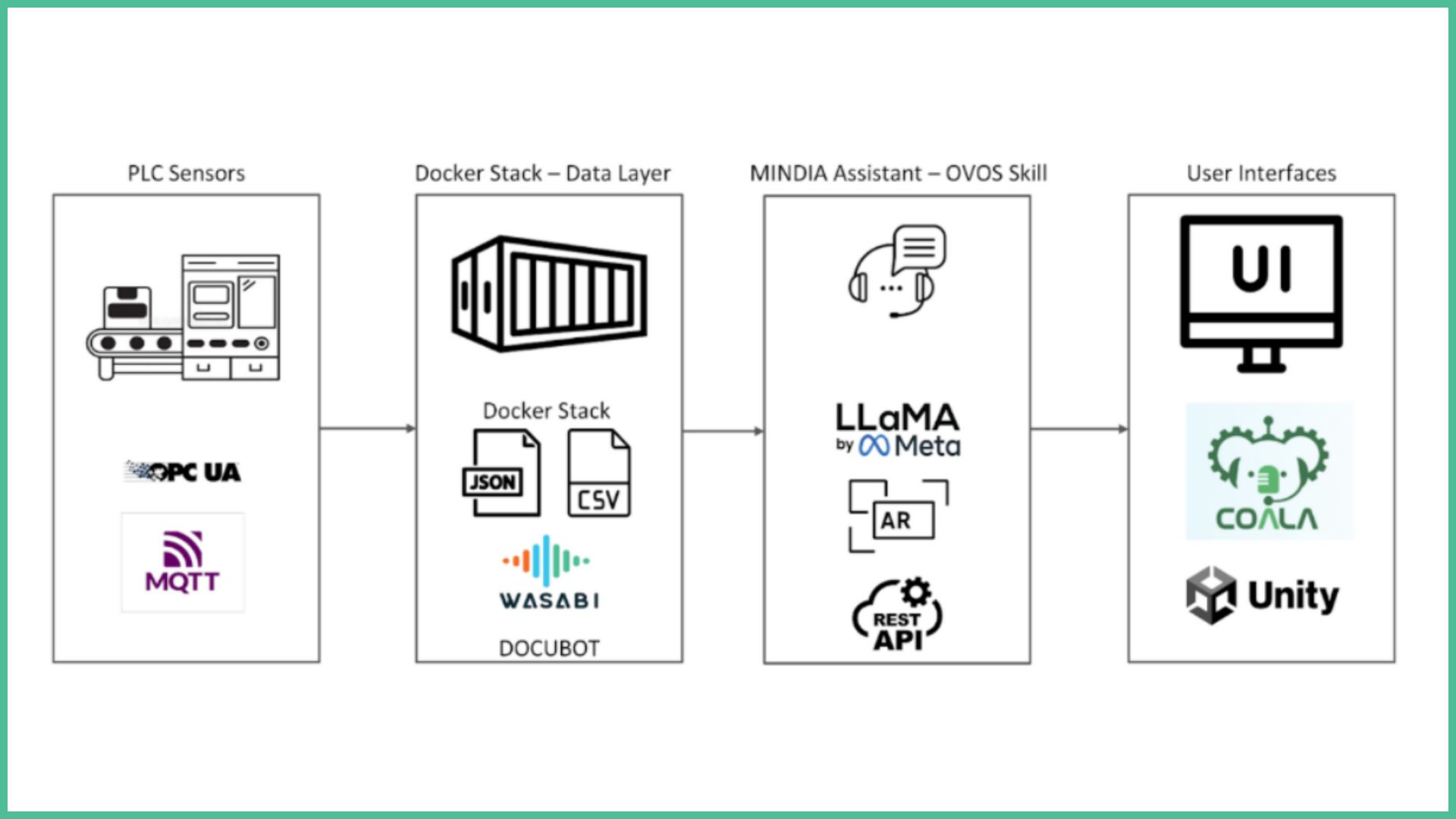

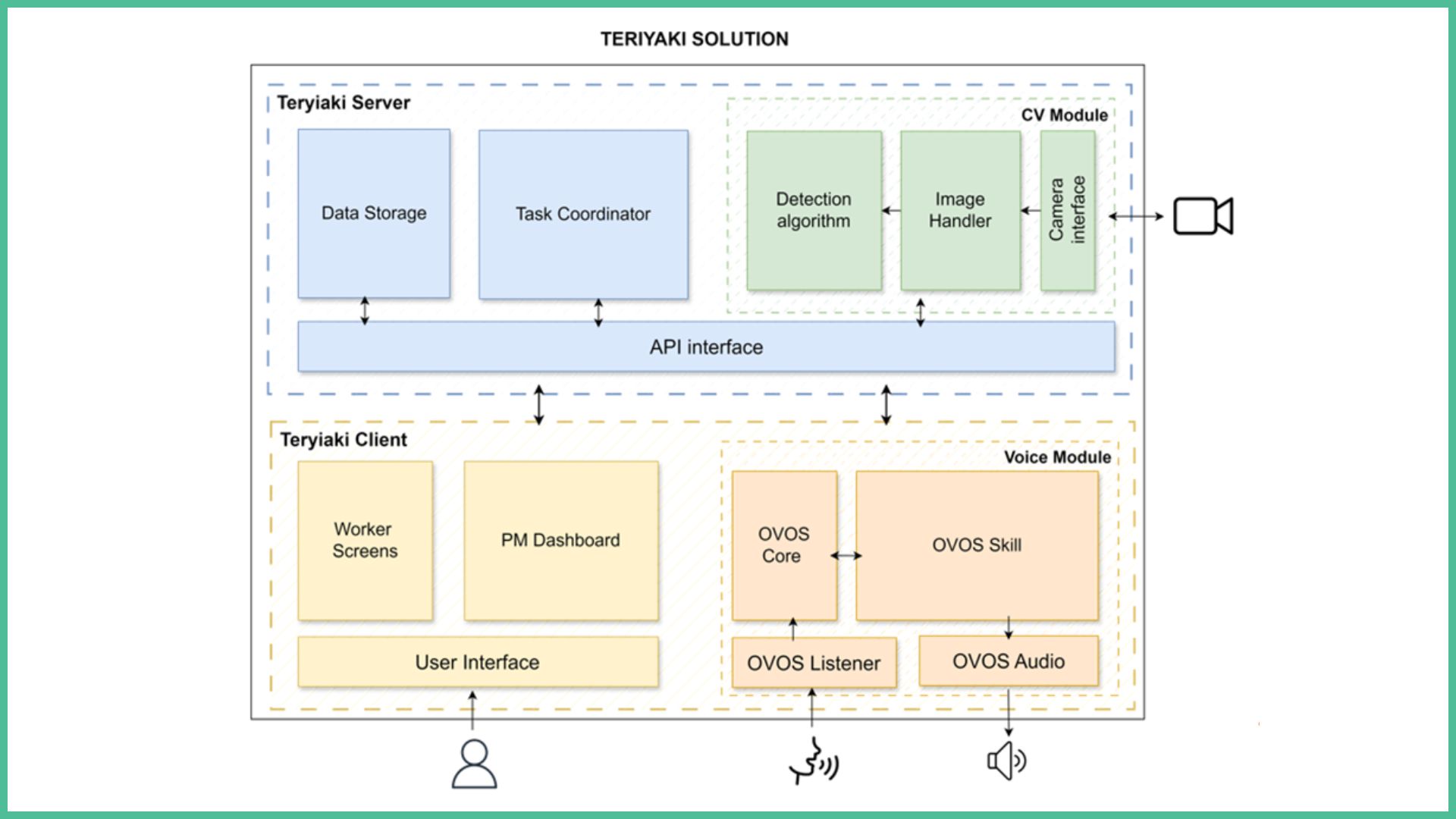

The experiment aims to improve the way industrial test procedures are created and executed by integrating a Digital Intelligent Assistant into the GEMS platform, an open-source software solution developed by Gemesis for test bench control and automation. GEMS is a modular software environment used to manage sensors and actuators, control real-time processes, implement safety procedures, and collect and analyse data in laboratory and production testing systems.

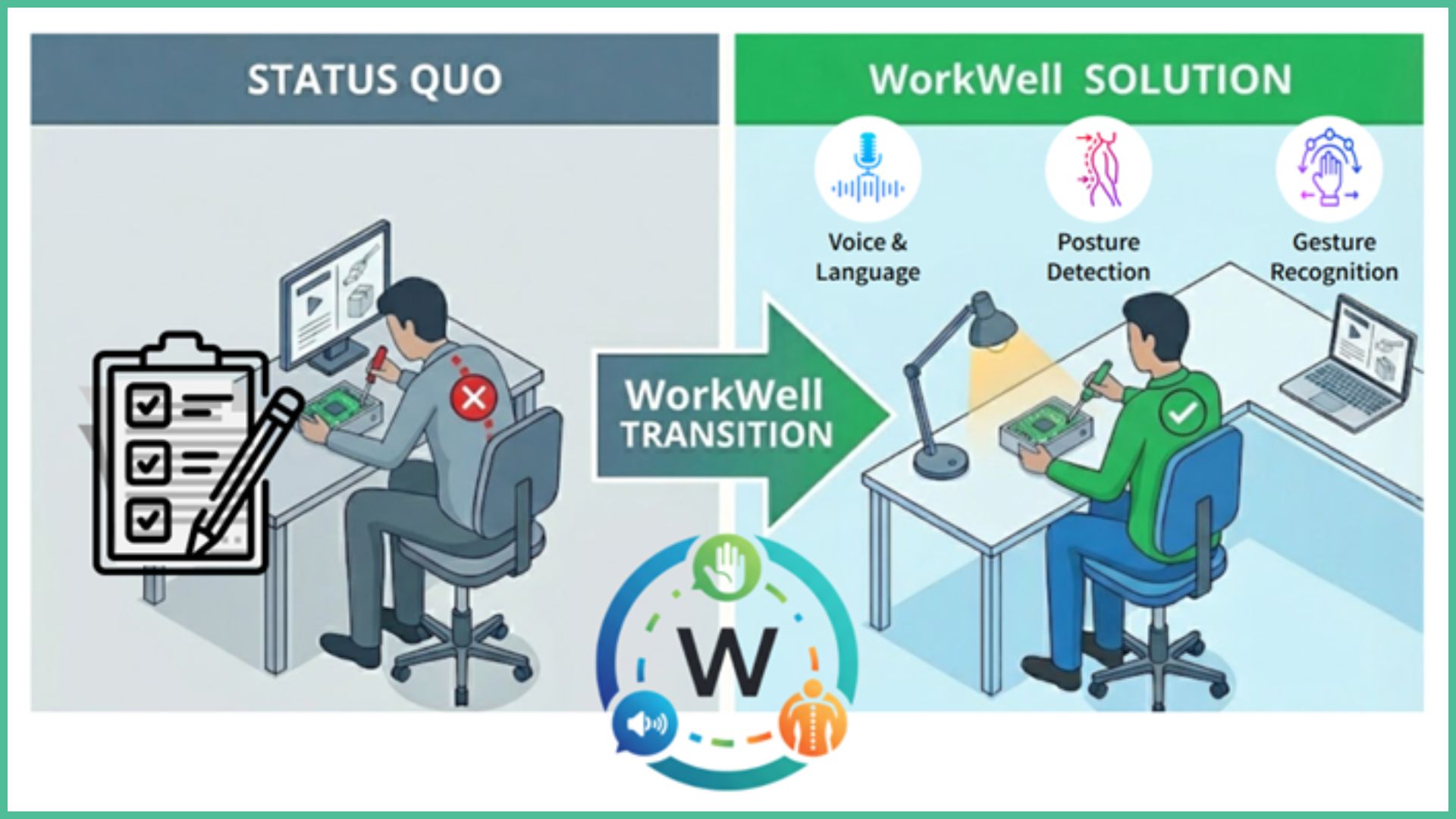

In many industrial sectors, including hydrogen technologies, battery systems, and advanced manufacturing components, test sequences are still created manually using spreadsheets or custom programming scripts. These sequences can be extremely long and complex, often containing thousands of steps with conditional logic, loops, and dependencies between measurements. The preparation of such procedures requires significant engineering expertise and time. As a result, the process is resource-intensive, prone to human error, and difficult to scale when new requirements or standards arise.

The experiment proposes the development and integration of a Digital Intelligent Assistant capable of transforming natural language descriptions or structured documents into executable test sequences. Engineers will be able to describe a desired test procedure using plain language or by uploading documents such as Excel files, technical specifications, or standards. The assistant will interpret this input and automatically generate a structured sequence compatible with the GEMS execution environment.

The core artificial intelligence technology is provided by ThinkDeep through its DeepBrain platform, which is based on large language model technology. This platform is designed to interpret technical content and structure it into formalised, machine-readable instructions. The generated test sequence is then transferred to GEMS, where it can be visualised, edited, and validated before execution.
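To make "formalised, machine-readable instructions" concrete, the following is a minimal sketch of how a generated sequence with steps, a guard condition, and a loop could be represented and serialised. The class names (`Step`, `Loop`, `Sequence`), the field names, and the channel names are hypothetical illustrations. The actual DeepBrain output format and the GEMS sequence schema are not described in this document.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class Step:
    # Hypothetical fields; not the GEMS schema.
    name: str
    action: str                      # e.g. "set", "wait", "measure"
    target: Optional[str] = None     # channel the action applies to
    value: Optional[float] = None    # setpoint, if any
    duration_s: float = 0.0
    condition: Optional[str] = None  # guard expression kept as plain text


@dataclass
class Loop:
    name: str
    repeat: int
    body: list = field(default_factory=list)


@dataclass
class Sequence:
    title: str
    items: list = field(default_factory=list)


def count_steps(items):
    """Count the steps that would run once every loop is expanded."""
    total = 0
    for item in items:
        if isinstance(item, Loop):
            total += item.repeat * count_steps(item.body)
        else:
            total += 1
    return total


# Illustrative sequence; all values are made up for the example.
seq = Sequence(
    title="Pressure cycling (illustrative)",
    items=[
        Step("pressurise", "set", target="inlet_pressure_bar", value=5.0, duration_s=10.0),
        Loop("cycle", repeat=3, body=[
            Step("hold", "wait", duration_s=30.0),
            Step("read", "measure", target="outlet_flow",
                 condition="inlet_pressure_bar > 4.5"),
        ]),
        Step("vent", "set", target="inlet_pressure_bar", value=0.0, duration_s=10.0),
    ],
)

# Serialise to JSON so the structure can be handed to another tool.
sequence_json = json.dumps(asdict(seq), indent=2)
```

Here `count_steps(seq.items)` gives 8 (one step, three repetitions of two steps, one final step). This shows how a short description can expand into a much longer executed sequence.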

A key component of the experiment is the development of a visual sequence viewer by Gemesis. This viewer will display the generated test steps in a clear, time-based representation, allowing engineers to review the logic, dependencies, and transitions between phases. This human-in-the-loop validation ensures that the system remains safe and transparent, particularly in production or safety-critical environments.
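As a rough illustration of a time-based representation, the sketch below turns a flat list of step durations into start and end times and renders them as text bars. It is an assumption-laden toy and not the Gemesis viewer: the function names, the `(name, duration_s)` input shape, and the text rendering are all hypothetical.

```python
from itertools import accumulate


def build_timeline(steps):
    """steps: list of (name, duration_s). Returns a list of (name, start_s, end_s)."""
    ends = list(accumulate(duration for _, duration in steps))
    starts = [0.0] + ends[:-1]
    return [(name, start, end) for (name, _), start, end in zip(steps, starts, ends)]


def render(timeline, scale_s=10.0):
    """Draw one text bar per step; each '#' covers roughly scale_s seconds."""
    lines = []
    for name, start, end in timeline:
        offset = int(start // scale_s)
        width = max(1, int((end - start) // scale_s))
        lines.append(f"{name:<12}|{' ' * offset}{'#' * width}")
    return "\n".join(lines)


# Illustrative durations in seconds.
timeline = build_timeline([("pressurise", 10.0), ("hold", 30.0), ("vent", 10.0)])
print(render(timeline))
```

Even this crude view makes step order and relative duration visible at a glance, which is the kind of check a reviewer would perform before approving a generated sequence.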

The experiment will demonstrate the solution through real industrial use cases in hydrogen and battery testing. Industrial and research actors will be involved as early adopters to validate the practical applicability of the assistant in real test bench scenarios. The Digital Innovation Hub CARA will support industrial engagement, expert review, and dissemination within its network of manufacturing stakeholders.

The relevance of the experiment lies in its ability to address a clear industrial bottleneck: the complexity and cost of designing test procedures. By combining artificial intelligence with structured automation and human supervision, the solution aims to reduce setup time, improve consistency, and enhance reliability in industrial testing. This contributes directly to increased competitiveness, improved productivity, and safer digital transformation within European small and medium-sized enterprises.

The experiment addresses a structural challenge in industrial testing environments: the growing complexity, cost, and rigidity of test sequence design and management. In sectors such as hydrogen systems, battery technologies, and advanced manufacturing components, test procedures have become increasingly sophisticated. They often involve long sequences with conditional logic, loops, interdependencies between measurements, and strict safety constraints. Despite this complexity, the creation of these test sequences remains largely manual, relying on spreadsheets or custom scripts developed by highly specialised engineers.

From an operational perspective, this situation creates significant inefficiencies. Engineers spend a considerable amount of time translating technical requirements into structured sequences, validating logic, and debugging configuration errors. Each modification to a specification, standard, or customer requirement may require manual rewriting and revalidation of large parts of the sequence. This slows down project delivery, reduces responsiveness to market changes, and limits the scalability of testing activities.

Technically, the challenge lies in managing the increasing volume and interdependence of data within test procedures. Modern test benches integrate numerous sensors, actuators, and control variables that must be synchronised in real time. Ensuring coherence between parameters, thresholds, safety interlocks, and measurement conditions requires deep domain knowledge and careful validation. Manual configuration increases the risk of inconsistencies or omissions that can compromise the reliability of test results.
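The kind of coherence check described above can be sketched as a small validation pass over a sequence. The example assumes a hypothetical channel table with operating ranges and an optional interlock limit. The dictionary keys (`min`, `max`, `interlock_max`, `channel`, `setpoint`) and all values are illustrative and do not come from GEMS.

```python
def check_sequence(channels, steps):
    """Return human-readable issues found in the steps (empty list if none)."""
    issues = []
    for i, step in enumerate(steps, start=1):
        ch = step["channel"]
        if ch not in channels:
            issues.append(f"step {i}: unknown channel '{ch}'")
            continue
        spec = channels[ch]
        value = step["setpoint"]
        # The setpoint must lie inside the channel's operating range.
        if not spec["min"] <= value <= spec["max"]:
            issues.append(
                f"step {i}: setpoint {value} outside "
                f"[{spec['min']}, {spec['max']}] for '{ch}'"
            )
        # A setpoint at or above the interlock limit would trip the safety chain.
        limit = spec.get("interlock_max")
        if limit is not None and value >= limit:
            issues.append(f"step {i}: setpoint {value} reaches interlock limit {limit} for '{ch}'")
    return issues


# Illustrative channel definitions and steps.
channels = {
    "inlet_pressure_bar": {"min": 0.0, "max": 10.0, "interlock_max": 9.0},
    "cell_temp_c": {"min": -20.0, "max": 80.0},
}
steps = [
    {"channel": "inlet_pressure_bar", "setpoint": 5.0},
    {"channel": "inlet_pressure_bar", "setpoint": 9.5},
    {"channel": "coolant_flow", "setpoint": 1.0},
]
issues = check_sequence(channels, steps)
```

With these inputs, the second step is flagged for reaching the interlock limit and the third for referencing an undefined channel. Both are mistakes that are easy to miss in a manually edited spreadsheet.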

From a regulatory and compliance perspective, industrial testing environments, particularly in hydrogen and energy-related sectors, are subject to strict safety and quality requirements. Test procedures must be traceable, transparent, and reproducible. However, when sequences are manually defined and modified, maintaining full traceability becomes challenging. The absence of structured, standardised generation methods increases the risk of deviations and makes audits more complex.

Data management is another critical pain point. Test sequences are often defined in formats that are not fully interoperable with digital tools or documentation systems. This limits integration with broader digital transformation initiatives and prevents efficient reuse of test knowledge across projects or teams. As companies seek to adopt more digital and automated workflows, the gap between manual sequence definition and digital execution becomes increasingly problematic.

The challenge also has a strategic dimension. In fast-growing and innovation-driven sectors such as hydrogen and battery technologies, time-to-market is critical. The inability to rapidly configure and validate new test procedures can delay product qualification and industrialisation. Furthermore, the strong dependency on expert knowledge creates bottlenecks and exposes companies to operational risk if key personnel are unavailable.

Overall, the current situation reflects a mismatch between the increasing sophistication of industrial systems and the largely manual methods used to define and manage test procedures. Addressing this challenge is essential to improve efficiency, reduce costs, enhance safety and traceability, and support the digital transformation of industrial testing processes.

Objective 1:

The first objective of the experiment is to develop and integrate a Digital Intelligent Assistant into the GEMS test bench platform in order to automate the generation of complex industrial test sequences. This objective aims to enable engineers to describe test procedures in natural language or structured documents and automatically convert them into structured, executable sequences compatible with real-time industrial environments.

Objective 2:

The second objective is to ensure safe, transparent, and reliable use of artificial intelligence in industrial testing by implementing a visual sequence viewer and human-in-the-loop validation mechanism. This objective guarantees that all automatically generated sequences can be reviewed, verified, and adjusted by engineers before execution, ensuring compliance with operational and safety constraints.
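One way to picture the human-in-the-loop mechanism is as an approval gate: a generated sequence cannot be released for execution until an engineer approves it, and any later edit withdraws that approval. The sketch below is a hypothetical illustration of this idea, and `ReviewedSequence` and its methods are invented names rather than part of GEMS.

```python
from enum import Enum


class Status(Enum):
    GENERATED = "generated"
    APPROVED = "approved"


class ReviewedSequence:
    """Wraps generated steps and blocks release until a human approves them."""

    def __init__(self, steps):
        self._steps = list(steps)
        self.status = Status.GENERATED
        self.approved_by = None

    def edit(self, index, new_step):
        # Any change invalidates a previous approval.
        self._steps[index] = new_step
        self.status = Status.GENERATED
        self.approved_by = None

    def approve(self, engineer):
        self.status = Status.APPROVED
        self.approved_by = engineer

    def release(self):
        """Return the steps for execution, or refuse if not approved."""
        if self.status is not Status.APPROVED:
            raise PermissionError("sequence has not been approved by an engineer")
        return list(self._steps)
```

Recording who approved the sequence also leaves a simple trace for later audits.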

Objective 3:

The third objective is to validate and demonstrate the solution in real industrial use cases, particularly in hydrogen and battery testing environments, and to prepare its replication through dissemination within the European Digital Innovation Hub ecosystem. This objective ensures that the developed solution is technically robust, economically relevant, and scalable for small and medium-sized enterprises across Europe.

The experiment is positioned within the advanced manufacturing and energy technology sectors, with a primary focus on hydrogen systems and battery technologies. These sectors are characterised by rapid technological evolution, strict safety requirements, and increasing pressure to accelerate qualification and validation processes. Industrial actors operating in these domains rely heavily on complex laboratory and pre-production test benches to validate components such as fuel cells, electrolysers, battery cells, and fluidic systems before integration into larger systems or market deployment.

The experiment will be carried out in France, primarily at the premises of Gemesis, where test benches and the GEMS software platform are developed and validated. The operational environment includes laboratory and pilot-scale test systems used for component validation and system qualification. Demonstrations will take place in real testing conditions, where sequences are executed on physical test benches equipped with sensors, actuators, safety interlocks, and data acquisition systems. The experiment therefore operates in a realistic industrial setting rather than a purely simulated environment.

The target users of the solution are test engineers, laboratory operators, automation specialists, and research and development teams working in hydrogen, battery, and related advanced manufacturing sectors. Additional stakeholders include system integrators, industrial SMEs, and research laboratories that require flexible and reliable test automation tools. The Digital Innovation Hub CARA will support engagement with these stakeholders, ensuring alignment with real industrial needs and facilitating broader replication within European manufacturing ecosystems.

Several operational and technical constraints shape the context of the experiment. Hydrogen and battery testing environments are subject to strict safety standards due to high pressures, reactive gases, thermal risks, and electrical hazards. Test procedures must therefore comply with internal safety rules, traceability requirements, and, where applicable, certification frameworks relevant to energy and mobility applications. Any automated generation of test sequences must preserve full transparency and allow human validation before execution.

Integration constraints are also significant. The solution must be compatible with existing test infrastructure, including programmable logic controllers, data acquisition systems, and industrial communication protocols. It must support interoperability with existing software environments and data formats used in industrial testing. Access to data is controlled and limited to operational test parameters and documentation provided within secure environments, ensuring compliance with data protection and confidentiality requirements.

Overall, the experiment takes place in a demanding industrial context where safety, reliability, interoperability, and regulatory compliance are critical. This real-world setting ensures that the developed solution is robust, applicable, and directly relevant to industrial testing challenges faced by European small and medium-sized enterprises.

EXPECTED IMPACT

The expected impact of the experiment is a significant improvement in the efficiency, reliability, and scalability of industrial test sequence design and execution. By integrating a Digital Intelligent Assistant into the GEMS test bench platform, the experiment aims to transform a manual and expertise-dependent process into a structured, assisted, and traceable workflow. Success will be measured through quantifiable improvements in engineering efficiency, reduction of configuration errors, and validated industrial adoption.

From an operational perspective, the primary measurable impact concerns the reduction of test sequence preparation time. Today, complex sequences in hydrogen and battery testing environments may require several days or even weeks of engineering work depending on their complexity. The experiment targets a reduction of at least 50 percent in configuration time through automated sequence generation from natural language or structured documents. This will directly increase productivity and shorten project lead times.
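The 50 percent target can be checked with simple arithmetic once preparation time has been recorded before and after the assistant is introduced. The sketch below shows the calculation. The function names are hypothetical and the hours used are illustrative placeholders, not measured results.

```python
def reduction_percent(baseline_hours, assisted_hours):
    """Relative reduction in preparation time, as a percentage of the baseline."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return 100.0 * (baseline_hours - assisted_hours) / baseline_hours


def meets_target(baseline_hours, assisted_hours, target_percent=50.0):
    """True if the measured reduction reaches the target."""
    return reduction_percent(baseline_hours, assisted_hours) >= target_percent


# Illustrative numbers only: 40 hours of manual preparation vs 18 hours assisted.
example_reduction = reduction_percent(40.0, 18.0)  # 55.0
example_ok = meets_target(40.0, 18.0)              # True
```

Measuring both figures on the same test procedure keeps the comparison fair, since preparation time varies widely with sequence complexity.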

A second measurable impact relates to error reduction and process consistency. Manual sequence creation increases the risk of configuration mistakes, inconsistencies, and logic gaps. By introducing structured generation combined with a visual sequence viewer and human validation, the experiment aims to reduce configuration-related errors and improve repeatability of test execution. Success will be evaluated through qualitative assessments and comparison of detected configuration issues before and after implementation.

For end users, including test engineers and laboratory operators, the solution is expected to simplify interaction with complex test systems. The assistant will lower the barrier to entry for less experienced users while maintaining expert-level control for advanced engineers. This improves labour efficiency and reduces dependency on a small number of highly specialised profiles, strengthening organisational resilience.

From a competitiveness standpoint, the experiment is expected to enhance Gemesis’ capacity to scale its solutions across multiple industrial sectors. Faster configuration and improved reliability will increase customer satisfaction and strengthen the company’s market positioning in hydrogen, battery, and advanced manufacturing applications. Industrial demonstrators and early adopters will provide tangible validation of the solution’s relevance.

In terms of sustainability, the experiment contributes indirectly to environmental performance by optimising testing workflows and reducing unnecessary test repetitions caused by configuration errors. More efficient validation cycles can shorten development time for energy technologies such as hydrogen and batteries, which are critical for the energy transition. Furthermore, the digitalisation of test procedures reduces reliance on paper-based documentation and improves structured data reuse.

The experiment will be considered successful if the defined performance indicators are achieved, including measurable reduction in setup time, validated industrial use cases, positive user feedback, and successful deployment of the solution through the European innovation ecosystem. Together, these impacts demonstrate not only technical feasibility but also economic relevance and long-term sustainability of the developed solution.